Ceph monitoring

When using the Virtual Appliance

- Use the local account lpar2rrd for hosting STOR2RRD on the virtual appliance

- Use /home/stor2rrd/stor2rrd as the product home

Ceph versions 14.2 (Nautilus) and newer are supported.

Enable Prometheus export module

    ceph mgr module enable prometheus

Enable Pool metrics

Selected pools

    ceph config set mgr mgr/prometheus/rbd_stats_pools "pool1,pool2,poolN"

All pools

    ceph config set mgr mgr/prometheus/rbd_stats_pools "*"

Enable performance counters in Prometheus

With the introduction of the ceph-exporter daemon, the Prometheus module no longer exports Ceph daemon perf counters as Prometheus metrics by default.

However, one may re-enable exporting these metrics by setting the module option exclude_perf_counters to false:

    ceph config set mgr mgr/prometheus/exclude_perf_counters false

Configure firewall
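Before opening the firewall, it may help to read the module options back and confirm that they took effect. This is a minimal sketch, assuming the ceph CLI is available with admin credentials on the node where it runs. The function name show_prometheus_settings is illustrative only, and exclude_perf_counters exists only on releases that ship the ceph-exporter daemon, so older clusters may report an error for that line.

```shell
# Print the enabled manager modules and the two Prometheus module options
# set in the steps above. Assumption: the ceph CLI can reach the cluster.
show_prometheus_settings() {
    # "prometheus" should appear among the enabled modules
    ceph mgr module ls
    # Pools selected for RBD statistics ("*" means all pools)
    ceph config get mgr mgr/prometheus/rbd_stats_pools
    # "false" means daemon perf counters are exported again
    ceph config get mgr mgr/prometheus/exclude_perf_counters
}

# Usage, on a node with cluster admin access:
#   show_prometheus_settings
```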

Enable access to the Prometheus export module from the LPAR2RRD appliance.

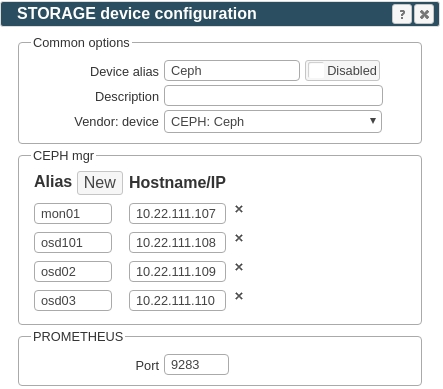

By default, the module accepts HTTP requests on port 9283.
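How the port is opened depends on the firewall in use. As one possible sketch, assuming the Ceph manager nodes run firewalld (an assumption, not something this guide requires), the port could be opened as follows. The function name open_prometheus_port and the FIREWALL_CMD override are hypothetical helpers added for illustration.

```shell
# Open the Prometheus module port on a Ceph manager node that uses firewalld.
# Assumption: firewalld is the active firewall and the caller is root.
# FIREWALL_CMD is a hypothetical override, e.g. FIREWALL_CMD=echo for a dry run.
open_prometheus_port() {
    # $1 = TCP port, defaults to 9283 (the module default)
    ${FIREWALL_CMD:-firewall-cmd} --permanent --add-port="${1:-9283}/tcp" \
        && ${FIREWALL_CMD:-firewall-cmd} --reload
}

# Usage, as root on every manager node that can host the module:
#   open_prometheus_port
```

This opens the port to all sources. Restricting it to the address of the monitoring host is left out of the sketch.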

Check connectivity:

    $ perl /home/stor2rrd/stor2rrd/bin/conntest.pl <CEPH MGR IP> 9283
    Connection to "<CEPH MGR IP>" on port "9283" is ok
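The port test confirms only that the TCP port is reachable. To also check that metrics are actually served, the endpoint can be fetched over HTTP. This is a minimal sketch, assuming curl is installed on the STOR2RRD host. The Prometheus module serves its data under /metrics, and the helper names metrics_url and count_metrics are illustrative only.

```shell
# Build the metrics URL for a manager node.
# $1 = manager host or IP, $2 = port (defaults to 9283)
metrics_url() {
    printf 'http://%s:%s/metrics\n' "$1" "${2:-9283}"
}

# Fetch the endpoint and count the lines that are not "#" comment lines.
# Assumption: curl is available. A count of 0 suggests no metrics came back.
count_metrics() {
    curl -s --max-time 10 "$(metrics_url "$1" "$2")" | grep -vc '^#'
}

# Usage:
#   count_metrics <CEPH MGR IP>
```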

STOR2RRD configuration

- Create new storage in STOR2RRD

Settings icon -> Storage -> New -> Vendor:device -> CEPH : Ceph

Configure hostnames/IPs

If you are using a load balancer translating hostname to an active manager node, then you can fill in only the load balanced hostname.

Otherwise, fill in the IP addresses/hostnames of all Ceph manager nodes that can host the Prometheus module.

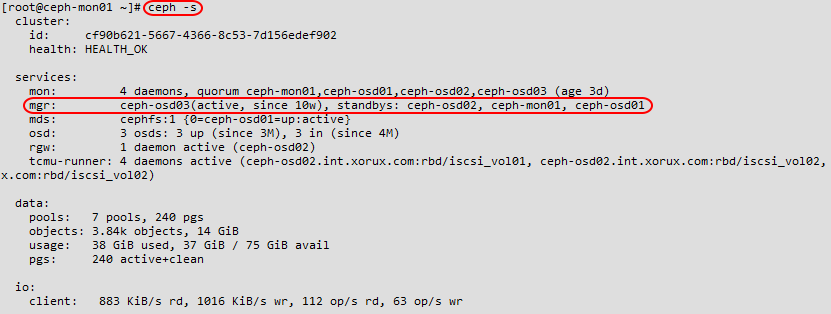

You can get a list of manager nodes from the "ceph -s" command.

- Schedule the storage agent to run from the stor2rrd crontab (lpar2rrd on the Virtual Appliance, where the entry might already exist)

    $ crontab -l | grep load_cephperf.sh
    $

Add the entry if it does not exist, as in the empty output above:

    $ crontab -e
    # Ceph
    0,5,10,15,20,25,30,35,40,45,50,55 * * * * /home/stor2rrd/stor2rrd/load_cephperf.sh > /home/stor2rrd/stor2rrd/load_cephperf.out 2>&1

Make sure the crontab already contains the entry that rebuilds the UI once an hour:

    $ crontab -e
    # STOR2RRD UI (just ONE entry of load.sh must be there)
    5 * * * * /home/stor2rrd/stor2rrd/load.sh > /home/stor2rrd/stor2rrd/load.out 2>&1
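The crontab checks can also be scripted so that commented-out entries are not mistaken for active ones. This is a minimal sketch. The helper name has_cron_entry is hypothetical, and it tests only whether the script name appears on an uncommented line.

```shell
# Succeed when the crontab text on stdin has an uncommented line
# that mentions the script name given as $1.
has_cron_entry() {
    grep -v '^[[:space:]]*#' | grep -qF "$1"
}

# Usage, as the stor2rrd user (lpar2rrd on the Virtual Appliance):
#   crontab -l | has_cron_entry load_cephperf.sh || echo "Ceph agent entry is missing"
#   crontab -l | has_cron_entry /load.sh || echo "UI entry is missing"
```

The second usage line searches for "/load.sh" with the leading slash because the plain name would match no other script here, but a bare "load.sh" pattern is easy to confuse with similarly named loaders when reading the output by eye.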

- Let the storage agent run for 15 - 20 minutes to collect data, then:

    $ cd /home/stor2rrd/stor2rrd
    $ ./load.sh

- Go to the web UI: http://<your web server>/stor2rrd/

Use Ctrl-F5 to refresh the web browser cache.